What is a Rate Limiter?

An API rate limiter is a mechanism that controls how many requests a user or client can make to an API within a specific time frame. It helps prevent abuse, enforces fair usage, and keeps the API stable by preventing server overload. By setting thresholds, such as a maximum number of requests per minute or per hour, a rate limiter ensures that no single client can overwhelm the system, which protects the backend and preserves quality of service for all users. It applies an algorithm to decide whether each incoming request is allowed, and in the design described below it also forwards allowed requests to the backend server best suited to handle them.

Things to Consider While Designing a Rate Limiter

- Messaging Protocol: Choose a protocol such as gRPC or HTTP/2, which multiplex many requests over a single connection. This avoids HTTP-level “head-of-line blocking” (where one slow request holds up the requests queued behind it) and lets requests be processed in parallel more efficiently.

- Message Compression (Kryo, GPB): Use a compact binary serialization format such as Kryo or Google Protocol Buffers (GPB) to reduce message size and improve efficiency, especially when handling large volumes of data.

- Pull, Push, or Hybrid Strategy: To handle a “celebrity problem” scenario, consider using a pull strategy. Instead of pushing notifications to millions of followers every time a celebrity posts, let clients fetch the data when they want to see it. This avoids overwhelming the system with fan-out requests.

- Client Connection Optimization: Each exchange typically involves three steps: connect, read/write, and close. In chat services, for instance, you can keep connections open (persistent connections) instead of connecting and closing for every message. This reduces the per-request steps from three to one and cuts overhead when there are many requests.

- Graceful Degradation: During overload, you can delay or skip non-essential features. In chat messaging, for example, delivery and read receipts aren’t critical in real time, so postponing them (they are still delivered eventually) helps maintain core functionality during high traffic.

- Algorithm: Choosing the right algorithm is the critical part of designing a rate limiter. Commonly used algorithms include:

- Token Bucket: Tokens are added to a bucket at a fixed rate, up to a maximum capacity. Each request consumes one token; if the bucket is empty, the request is rejected or delayed. The capacity determines how large a burst is allowed.

- Leaky Bucket: Similar to the token bucket, but requests leave the bucket at a fixed rate. Excess requests are queued until they can be processed and are dropped once the queue is full, which smooths bursts into a steady flow.

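- Fixed Window Counter: Counts requests within fixed time windows (for example, each clock minute). Once the limit is reached, all further requests are blocked until the next window begins. It is simple, but a burst that straddles a window boundary can let through up to twice the limit in a short span.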

- Sliding Window: Combines the fixed window approach with a sliding time frame, counting requests over the most recent period (for example, the last 60 seconds) instead of resetting the count at the end of each interval. This keeps the count accurate around window boundaries.

- Exponential Backoff: Strictly a client-side retry strategy rather than a limiting algorithm, it increases the wait time between retries after each denied request, reducing server load during peak times.

- Redis-based Rate Limiting: Less an algorithm than an implementation choice, this stores counters or tokens in Redis, an in-memory data store, so that multiple rate limiter instances can share state and make fast, consistent decisions.
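The two bucket algorithms above can be sketched in Python as follows. This is a minimal, single-process sketch rather than a production implementation: the class names are hypothetical, and time is passed in explicitly as `now` (seconds, for example from `time.monotonic()`) so the behavior is deterministic. The leaky bucket follows the queue-based description above; in a real system a worker would call `leak` on a timer and hand the released requests to the backend.

```python
from collections import deque


class TokenBucket:
    """Tokens refill at a fixed rate up to `capacity`; each request spends one."""

    def __init__(self, capacity: float, refill_rate: float, now: float = 0.0):
        self.capacity = capacity        # maximum tokens, i.e. the largest allowed burst
        self.refill_rate = refill_rate  # tokens added per second
        self.tokens = capacity          # start with a full bucket
        self.last_refill = now

    def allow(self, now: float) -> bool:
        # Add the tokens earned since the last call, never exceeding capacity.
        elapsed = now - self.last_refill
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_rate)
        self.last_refill = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # bucket empty: reject (or delay) the request


class LeakyBucket:
    """Requests wait in a bounded queue and leave it at a fixed rate."""

    def __init__(self, capacity: int, leak_rate: float, now: float = 0.0):
        self.capacity = capacity    # maximum number of queued requests
        self.leak_rate = leak_rate  # requests released per second
        self.queue = deque()
        self.last_leak = now

    def offer(self, request) -> bool:
        # Queue the request if there is room; otherwise it overflows and is rejected.
        if len(self.queue) >= self.capacity:
            return False
        self.queue.append(request)
        return True

    def leak(self, now: float) -> list:
        # Release as many queued requests as the fixed rate allows since the last leak.
        due = int((now - self.last_leak) * self.leak_rate)
        released = []
        while due > 0 and self.queue:
            released.append(self.queue.popleft())
            due -= 1
        if self.queue:
            # Still backlogged: advance only by the time the released requests used up.
            self.last_leak += len(released) / self.leak_rate
        else:
            # Queue drained: idle time should not bank extra release capacity.
            self.last_leak = now
        return released
```

With `TokenBucket(capacity=2, refill_rate=1.0)`, two back-to-back requests at time 0 are accepted, a third is rejected, and another is accepted one second later once a token has been refilled. The token bucket permits bursts up to its capacity, while the leaky bucket always emits requests at the same steady pace.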

Each of these algorithms has its trade-offs, and the choice depends on the specific requirements of the system.
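The trade-off between the fixed window counter and the sliding window can be seen in a short Python sketch. It is single-process and in-memory, with hypothetical class names. The sliding window shown here is the "sliding log" variant, which stores one timestamp per accepted request; a "sliding window counter" variant that approximates this with two fixed-window counts also exists.

```python
from collections import defaultdict, deque


class FixedWindowCounter:
    """Allows `limit` requests per client in each fixed window of `window` seconds."""

    def __init__(self, limit: int, window: float):
        self.limit = limit
        self.window = window
        # (client, window index) -> count; old windows are never evicted in this sketch.
        self.counts = defaultdict(int)

    def allow(self, client: str, now: float) -> bool:
        key = (client, int(now // self.window))  # index of the window containing `now`
        if self.counts[key] >= self.limit:
            return False
        self.counts[key] += 1
        return True


class SlidingWindowLog:
    """Allows `limit` requests per client in any trailing span of `window` seconds."""

    def __init__(self, limit: int, window: float):
        self.limit = limit
        self.window = window
        self.log = defaultdict(deque)  # client -> timestamps of accepted requests

    def allow(self, client: str, now: float) -> bool:
        timestamps = self.log[client]
        # Discard timestamps that have slid out of the trailing window.
        while timestamps and timestamps[0] <= now - self.window:
            timestamps.popleft()
        if len(timestamps) >= self.limit:
            return False
        timestamps.append(now)
        return True


# Three requests just before a minute boundary and three just after it.
fixed = FixedWindowCounter(limit=3, window=60)
sliding = SlidingWindowLog(limit=3, window=60)
burst = [59.0, 59.0, 59.0, 60.0, 60.0, 60.0]
print(sum(fixed.allow("alice", t) for t in burst))    # 6: the counter resets at t=60
print(sum(sliding.allow("alice", t) for t in burst))  # 3: the t=59 requests are still in the window
```

The fixed window admits twice the limit within one second at the boundary, while the sliding log holds the line, at the cost of storing a timestamp per accepted request.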

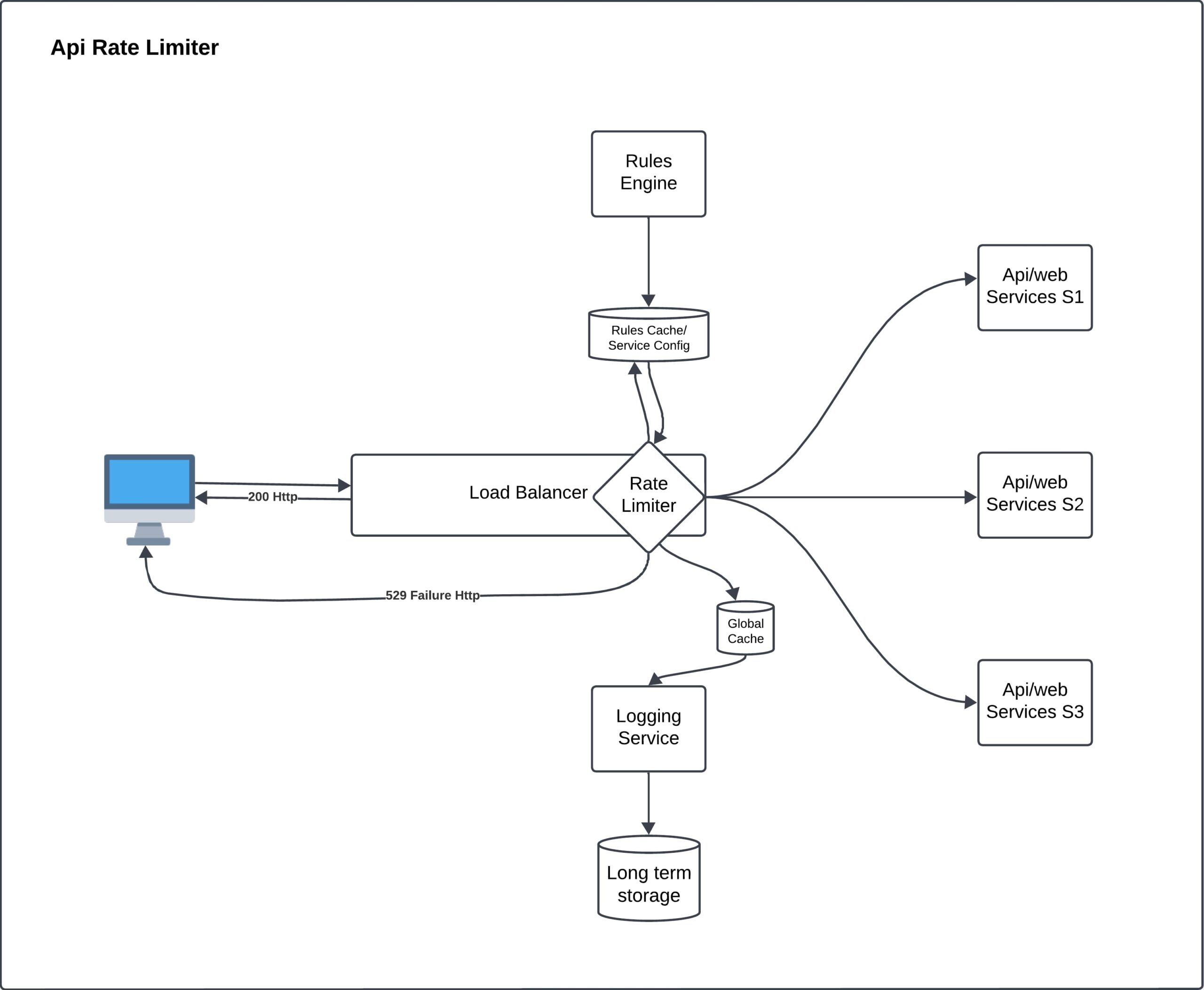

Designing a Rate Limiter

1. Client Request

Requests originate from a client, such as a user’s device, and are directed towards a server to access services. These requests are initially received by the Load Balancer.

2. Load Balancer

The load balancer distributes incoming requests across multiple servers to keep processing even and prevent any single server from being overloaded. In this design, it forwards each request to one of N Rate Limiter instances, using its own algorithm to choose which instance receives it.
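As an illustration of that selection step, here is a minimal Python sketch of round robin, one common policy a load balancer might use to pick among N rate limiter instances. The text does not say which algorithm is used, so round robin and the instance names are assumptions for illustration.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands requests to rate limiter instances in a fixed rotating order."""

    def __init__(self, limiters):
        if not limiters:
            raise ValueError("need at least one rate limiter instance")
        self._rotation = cycle(limiters)

    def pick(self):
        # Each call returns the next instance, wrapping around after the last one.
        return next(self._rotation)


# Hypothetical instance names.
balancer = RoundRobinBalancer(["limiter-1", "limiter-2", "limiter-3"])
print([balancer.pick() for _ in range(4)])  # ['limiter-1', 'limiter-2', 'limiter-3', 'limiter-1']
```

Because round robin can send successive requests from the same client to different instances, the instances must share their counters, which is the role of the Global Cache described below. Hashing the client identifier instead would pin each client to one instance, at the cost of uneven load when a few clients are very active.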

3. Rate Limiter

The Rate Limiter is the core component that controls the flow of requests. Its job is to determine whether the request exceeds the predefined limit or falls within acceptable boundaries.

- Global Cache: The rate limiter uses a Global Cache to quickly check whether a user has already reached their limit. This cache stores information such as client identifiers and the number of requests each has made within the current time frame, enabling fast decisions. When the system runs multiple rate limiters, they all share this cache, so a user whose requests reach different limiters (for example, by connecting from different geographical regions through a VPN) is still treated as the same client.
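The effect of a shared Global Cache can be sketched in Python with two rate limiter instances that read and write one counter store. Here an in-process dictionary guarded by a lock stands in for a real shared cache such as Redis (an assumption, since the text does not name the cache); with Redis, the atomic increment would typically be an `INCR` on the key, with `EXPIRE` letting old windows age out. The class names are hypothetical, and a fixed window counter is used for brevity.

```python
import threading
from collections import defaultdict


class SharedCounterStore:
    """In-process stand-in for a global cache; increment() is atomic."""

    def __init__(self):
        self._counts = defaultdict(int)
        self._lock = threading.Lock()

    def increment(self, key: str) -> int:
        # The lock makes read-modify-write atomic, as a shared cache's counter command would.
        with self._lock:
            self._counts[key] += 1
            return self._counts[key]


class RateLimiterNode:
    """One of N rate limiter instances; all instances share the same store."""

    def __init__(self, name: str, store: SharedCounterStore, limit: int, window: int):
        self.name = name
        self.store = store
        self.limit = limit
        self.window = window

    def allow(self, client_id: str, now: float) -> bool:
        # Same key on every node, so a client's requests are counted together
        # no matter which node receives them. Denied attempts are counted too.
        key = f"{client_id}:{int(now // self.window)}"
        return self.store.increment(key) <= self.limit


store = SharedCounterStore()
eu = RateLimiterNode("limiter-eu", store, limit=2, window=60)
us = RateLimiterNode("limiter-us", store, limit=2, window=60)
print(eu.allow("alice", 10.0), us.allow("alice", 11.0), eu.allow("alice", 12.0))
# True True False: both nodes update one counter, so the third request is over the limit
```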

4. Rules Engine and Configuration

- Rules Engine: This engine defines the policies that dictate how many requests a client can make and within what time frame. For instance, it could specify a maximum of 100 requests per minute per user. It applies a specific rate-limiting algorithm (for example, fixed window, sliding window, or token/leaky bucket) to determine whether a request should be processed, halted, or rejected.

- Rules Cache/Service Config: This cache stores the rate-limiting rules so the rate limiter can check request limits quickly, without fetching the rules anew for every request. It also holds service configuration data sourced from the service registry, which determines the appropriate backend service (e.g., S1, S2, or S3) for each request. Serving both purposes from one cache streamlines rate limiting and service routing alike.
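A rule lookup backed by a Rules Cache/Service Config might look like the Python sketch below. Everything here is hypothetical: the `Rule` fields, the tier and endpoint keys, the route prefixes, and the time-to-live (TTL) refresh policy are illustrative assumptions, with the example rule mirroring the 100-requests-per-minute policy above.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    limit: int           # maximum requests allowed per window
    window_seconds: int  # window length
    algorithm: str       # e.g. "sliding_window" or "token_bucket"


def load_config():
    """Hypothetical loader; a real system would read a rules store and a service registry."""
    rules = {
        ("free", "/weather"): Rule(limit=100, window_seconds=60, algorithm="sliding_window"),
        ("pro", "/weather"): Rule(limit=1000, window_seconds=60, algorithm="token_bucket"),
    }
    routes = {"/weather": "S1", "/forecast": "S2", "/alerts": "S3"}  # path prefix -> service
    return rules, routes


class RulesCache:
    """Keeps rules and routes in memory and reloads them once `ttl` seconds have passed."""

    def __init__(self, loader, ttl: float = 30.0, clock=time.monotonic):
        self._loader = loader
        self._ttl = ttl
        self._clock = clock
        self._rules, self._routes = {}, {}
        self._expires_at = None  # None means nothing has been loaded yet

    def _refresh_if_stale(self):
        now = self._clock()
        if self._expires_at is None or now >= self._expires_at:
            self._rules, self._routes = self._loader()
            self._expires_at = now + self._ttl

    def rule_for(self, tier: str, endpoint: str):
        self._refresh_if_stale()
        return self._rules.get((tier, endpoint))  # None means no rule applies

    def service_for(self, path: str):
        self._refresh_if_stale()
        for prefix, service in self._routes.items():
            if path.startswith(prefix):
                return service
        return None


cache = RulesCache(load_config)
print(cache.rule_for("free", "/weather").limit)  # 100
print(cache.service_for("/forecast/today"))      # S2
```

The TTL is a simple way to trade freshness for speed: lookups between reloads are plain dictionary reads, and rule changes take effect within one TTL.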

5. Forwarding Requests

- If the request is allowed, the rate limiter forwards it to one of the API/web services: Service S1, Service S2, or Service S3. Each service is a different backend component that handles specific types of requests.

- If the request exceeds the limit, the rate limiter responds with HTTP 429 Too Many Requests, indicating that the client has sent too many requests in a given amount of time. This response tells the client to reduce its request frequency and may include a Retry-After header stating how long to wait.
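The allow/deny branch can be sketched as a small Python function that either forwards the request or returns HTTP 429 with a Retry-After header. The `Response` type, the `is_allowed` and `route` callables, and the 60-second Retry-After value are hypothetical stand-ins for a real web framework and real backends. The sketch also includes the client-side exponential backoff listed among the algorithms, using the common "full jitter" variant (a random wait between zero and the exponential cap), which is one choice among several.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Response:
    status: int
    body: str
    headers: dict = field(default_factory=dict)


def handle_request(client_id, path, now, is_allowed, route):
    """Forward allowed requests to a backend service; answer the rest with 429."""
    if not is_allowed(client_id, now):
        # 429 Too Many Requests (RFC 6585); Retry-After gives the wait in seconds.
        return Response(429, "Too Many Requests", {"Retry-After": "60"})
    service = route(path)
    if service is None:
        return Response(404, "No service for this path")
    # Stand-in for proxying the request to S1, S2, or S3.
    return Response(200, f"handled by {service}")


def backoff_delays(attempts, base=1.0, cap=60.0, rng=random.random):
    """Client side: wait a random time up to base * 2**n (capped) before retry n."""
    return [rng() * min(cap, base * 2 ** n) for n in range(attempts)]


routes = {"/weather": "S1"}
allow_all = lambda client_id, now: True
deny_all = lambda client_id, now: False
print(handle_request("alice", "/weather", 0.0, allow_all, routes.get).status)  # 200
print(handle_request("alice", "/weather", 0.0, deny_all, routes.get).status)   # 429
```

The jitter spreads out retries from many clients that were throttled at the same moment, so they do not all return at once.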

6. Logging Service

The rate limiter is connected to a Logging Service, which records every decision, whether a request was allowed or denied. This logging serves two main purposes:

- Audit Trail: It helps in tracing any suspicious activity.

- Analytics: It provides insights into request patterns and helps adjust rate limits when necessary.
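One way to make these records easy to audit and analyze is to emit one structured JSON line per decision, as in the Python sketch below. It uses the standard `logging` and `json` modules; the logger name and the record fields are hypothetical, and shipping the lines to the Logging Service is left to whatever handler the deployment configures.

```python
import json
import logging

logger = logging.getLogger("rate_limiter")  # hypothetical logger name


def log_decision(client_id: str, path: str, allowed: bool, rule: str, now: float) -> str:
    """Build one JSON record per rate-limit decision, log it, and return it."""
    record = json.dumps(
        {
            "ts": now,
            "client_id": client_id,
            "path": path,
            "decision": "allowed" if allowed else "denied",
            "rule": rule,  # which rule produced the decision, useful when tuning limits
        },
        sort_keys=True,
    )
    logger.info(record)
    return record


logging.basicConfig(level=logging.INFO, format="%(message)s")
log_decision("alice", "/weather", False, "free:/weather", 1700000000.0)
```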

7. Long-Term Storage

The Logging Service stores its data in Long-Term Storage. This ensures that the data can be analyzed in the future, which is useful for understanding usage trends, diagnosing system issues, or adjusting the rate limiter’s configurations.
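As a small example of the later analysis this enables, the Python sketch below computes each client's share of denied requests from stored log lines, a figure that could inform whether a limit is too strict or too loose. It assumes each stored entry is a JSON object with hypothetical `client_id` and `decision` fields.

```python
import json
from collections import Counter


def denial_rates(lines):
    """Return {client_id: fraction of that client's requests that were denied}."""
    total, denied = Counter(), Counter()
    for line in lines:
        entry = json.loads(line)
        total[entry["client_id"]] += 1
        if entry["decision"] == "denied":
            denied[entry["client_id"]] += 1
    return {client: denied[client] / total[client] for client in total}


stored = [
    '{"client_id": "alice", "decision": "allowed"}',
    '{"client_id": "alice", "decision": "denied"}',
    '{"client_id": "bob", "decision": "allowed"}',
]
print(denial_rates(stored))  # {'alice': 0.5, 'bob': 0.0}
```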

Real-Life Analogy

Imagine a popular website that offers weather data. People from all over the world want to access this information, and if everyone made unlimited requests at the same time, the servers would crash. The rate limiter ensures that each user gets a fair share of requests without overwhelming the server, which keeps the service reliable for everyone.

Summary of Components in the Diagram:

- Client: User’s device making requests.

- Load Balancer: Directs requests to ensure even distribution.

- Rate Limiter: Checks if requests are within allowed limits.

- Rules Engine: Sets the limits for requests.

- Rules Cache/Service Config: Stores the request limit rules and service routing configuration for quick access.

- Global Cache: Holds data about recent requests for efficient validation.

- API/Web Services (S1, S2, S3): Backend services handling the requests.

- Logging Service: Records actions for monitoring and analysis.

- Long-Term Storage: Stores logs for later analysis.

Together, these components keep services accessible, efficient, and fair for all users, with each one playing a distinct role in the rate limiting process.